Conversational AI for performance evaluations

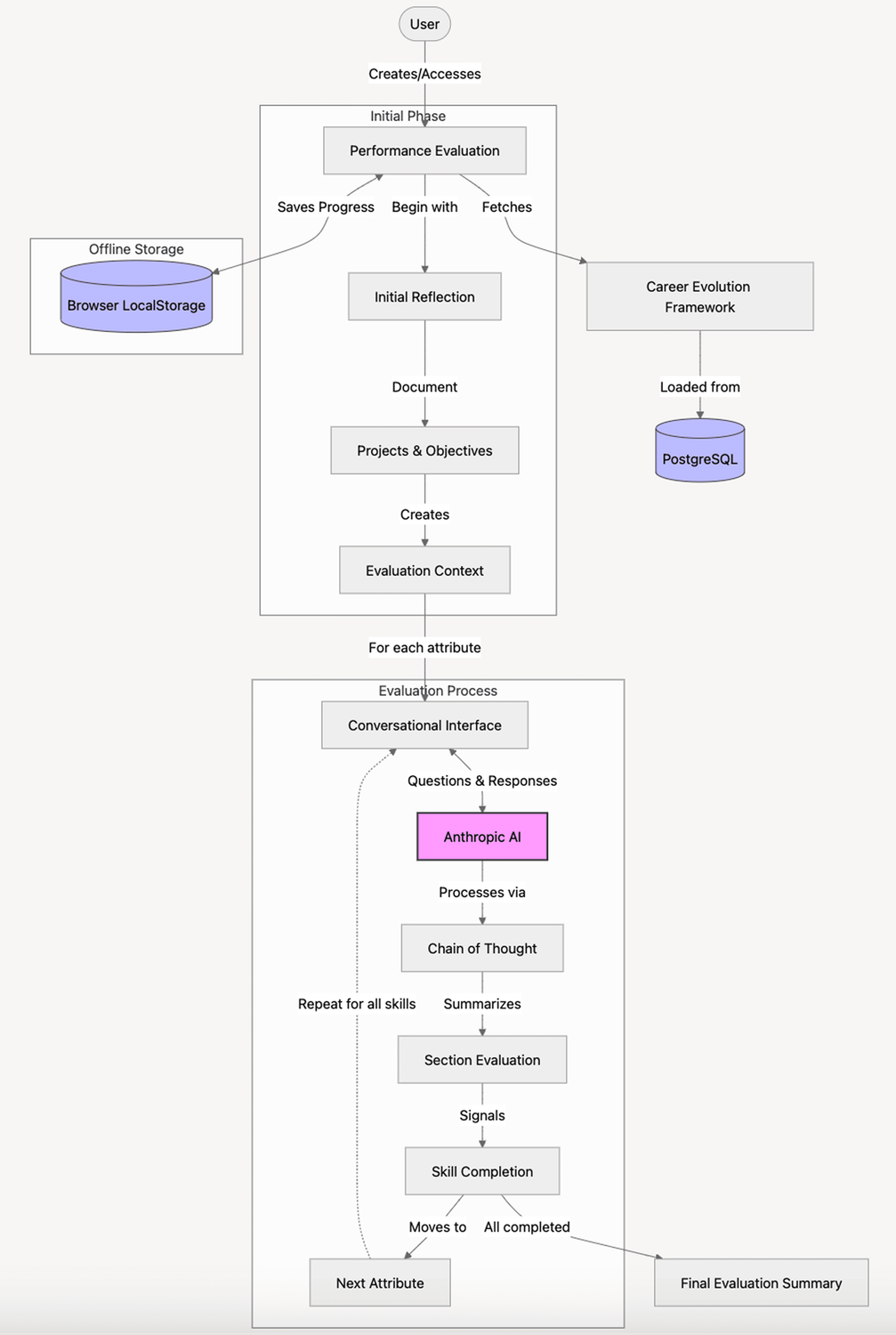

We built an offline web application that uses our career evolution framework to guide performance evaluations through a conversational AI.

The story

At Think-it, we have a comprehensive performance evaluation process based on our career evolution framework. This framework spans engineering and non-engineering roles and contains between 13 and 16 attributes across different career paths. It starts with a common path for levels 1-2, then branches into three specialization paths (junior management, team leadership, individual contributor) for levels 3-6.

The existing performance evaluation process was time-consuming and tedious, requiring navigation between Google Sheets, Notion, and Small Improvements. Team leads and direct reports had to spend significant time ensuring fair and complete assessments, and the framework's complexity made this challenging. We needed a solution to streamline this process while preserving the rigor and fairness of our evaluations.

Think-it’s role

Rather than generating evaluations from scratch, the AI synthesizes user inputs, rephrases them for readability, and guides the conversation through a chain-of-thought process (thinking, summarizing, ideating the next step). When sufficient information is gathered, the bot signals completion for that skill area, and users move to the next one until a complete evaluation is formed.
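The per-skill loop described above can be sketched roughly as follows. This is a minimal illustration under stated assumptions, not the production implementation: the `Turn` structure, the `evaluate_skill` and `run_evaluation` functions, and the `stub_model` stand-in for the language model call are all hypothetical names introduced here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Turn:
    """One model response, mirroring the thinking / summarizing / next-step chain."""
    thinking: str       # the model's reasoning about the answers so far
    summary: str        # rephrased synthesis of what the user has said
    next_question: str  # follow-up question; empty once nothing is left to ask
    complete: bool      # True when enough information has been gathered


# Hypothetical model interface: (skill name, user answers so far) -> Turn
ModelFn = Callable[[str, List[str]], Turn]


def evaluate_skill(skill: str, model: ModelFn,
                   ask_user: Callable[[str], str], max_turns: int = 10) -> str:
    """Ask follow-up questions until the model signals completion for this skill."""
    answers: List[str] = []
    turn = model(skill, answers)
    for _ in range(max_turns):  # hard cap so a conversation cannot loop forever
        if turn.complete:
            break
        answers.append(ask_user(turn.next_question))
        turn = model(skill, answers)
    return turn.summary


def run_evaluation(skills: List[str], model: ModelFn,
                   ask_user: Callable[[str], str]) -> Dict[str, str]:
    """Walk through every skill area in order and collect the summaries."""
    return {skill: evaluate_skill(skill, model, ask_user) for skill in skills}


def stub_model(skill: str, answers: List[str]) -> Turn:
    """Stand-in for a real model call: signals completion after two answers."""
    done = len(answers) >= 2
    return Turn(
        thinking=f"{len(answers)} answer(s) collected for {skill}",
        summary=" ".join(answers),
        next_question="" if done else f"Can you give an example of {skill}?",
        complete=done,
    )
```

In this sketch the summary is built only from the user's own answers, which reflects the idea that the AI synthesizes and rephrases input rather than generating an evaluation from scratch.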

We tackled three core ethical AI concerns head-on:

- Privacy: We implemented a completely offline solution using managed services with no data retention.

- Bias Mitigation: We ensured gender-neutral interaction with the model by avoiding names and gendered pronouns.

- Black Box Problem: Instead of asking the AI to generate content directly, we used a Socratic approach where the AI asks questions to prompt user reflection.

This approach to AI ethics made the project stand out from traditional software development work.
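As an illustration of the bias-mitigation point, one possible way to keep names and gendered pronouns out of the text sent to a model is to neutralize them beforehand. The sketch below is an assumption about how this could be done, not a description of the actual tool, which may have relied on prompt design instead. The `neutralize` function and the `PRONOUNS` table are hypothetical, and the approach is deliberately naive: it does not fix verb agreement, and "her" is ambiguous between "them" and "their".

```python
import re

# Naive pronoun mapping (illustrative only, not exhaustive).
PRONOUNS = {
    "he": "they", "she": "they",
    "him": "them", "his": "their",
    "her": "their",  # ambiguous: "her" can also mean "them"; naive choice
    "hers": "theirs",
    "himself": "themselves", "herself": "themselves",
}

PRONOUN_PATTERN = re.compile(r"\b(" + "|".join(PRONOUNS) + r")\b", re.IGNORECASE)


def neutralize(text: str, names: list, placeholder: str = "the team member") -> str:
    """Replace known names with a placeholder and gendered pronouns with neutral ones."""
    for name in names:
        text = re.sub(rf"\b{re.escape(name)}\b", placeholder, text, flags=re.IGNORECASE)

    def swap(match):
        word = match.group(0)
        neutral = PRONOUNS[word.lower()]
        # Preserve sentence-initial capitalization.
        return neutral.capitalize() if word[0].isupper() else neutral

    return PRONOUN_PATTERN.sub(swap, text)
```

For example, `neutralize("Sara shipped the feature and she documented his review.", ["Sara"])` would return `"the team member shipped the feature and they documented their review."`.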

Tech stack

Why it mattered

While an initial prototype had been built months earlier during a team member's sabbatical, the production version was developed within a compressed two-week sprint.

The most remarkable aspect of the tool was its elegant simplicity coupled with its effectiveness. Despite being a proof of concept, it created a more cohesive performance evaluation pipeline.

The project proved that we could apply AI ethically and effectively to internal collective processes. It opened doors for future applications, such as ingesting materials from one-on-ones and project feedback using an AI agent approach.